When running an EKS cluster, you might face two issues:

- Running out of IP addresses assigned to pods.

- Low pod count per node (due to ENI limits).

In this article, you will learn how to overcome those.

Before we start, here is some background on how intra-node networking works in Kubernetes.

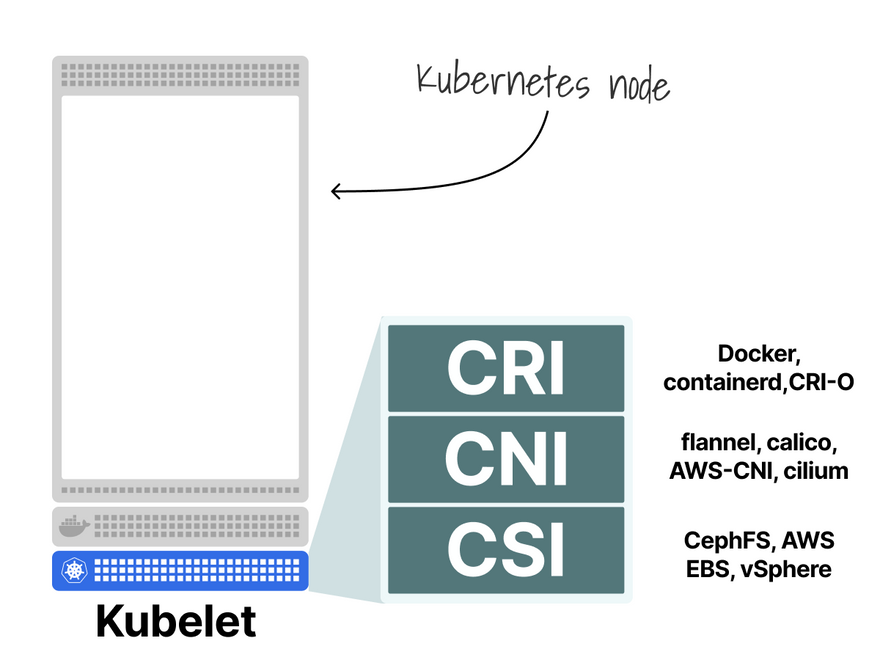

When a pod is scheduled to a node, the kubelet delegates:

- Creating the container to the Container Runtime.

- Attaching the container to the network to the CNI.

- Mounting volumes to the CSI.

Let's focus on the CNI part.

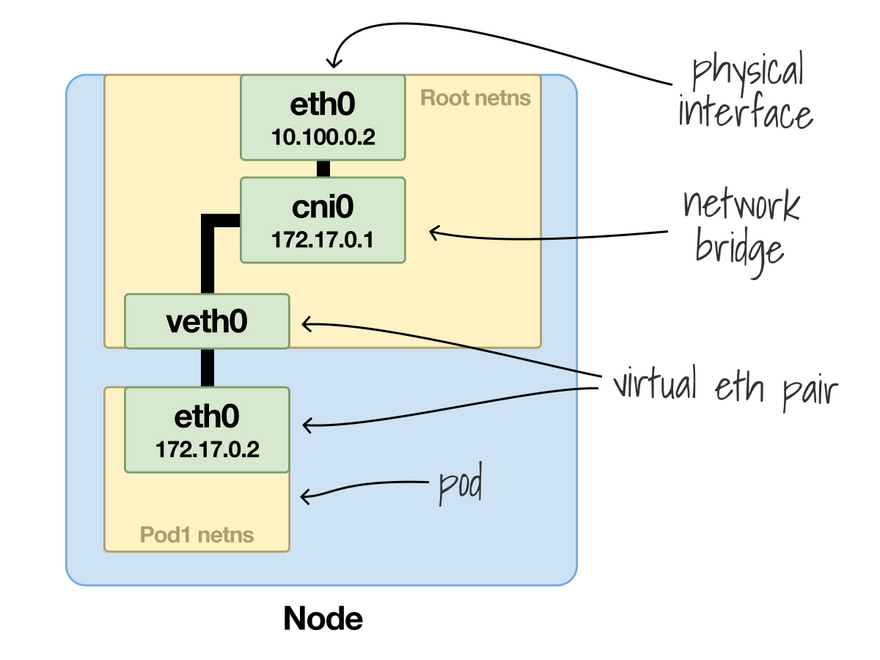

Each pod has its own isolated Linux network namespace and is attached to a bridge.

The CNI is responsible for creating the bridge, assigning the IP and connecting veth0 to the cni0.

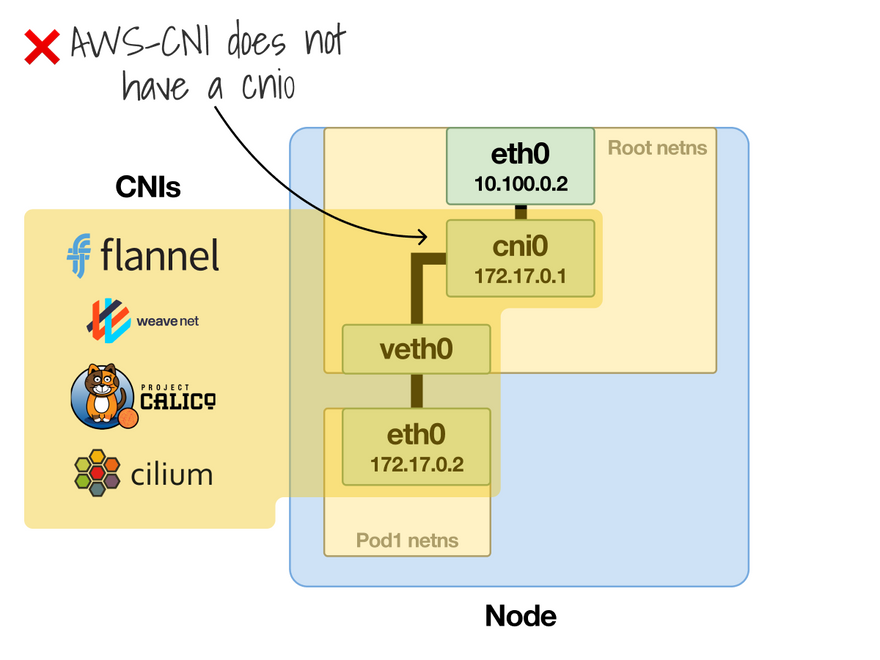

This is the usual setup, but different CNIs might use other means to connect the container to the network.

As an example, there might not be a cni0 bridge.

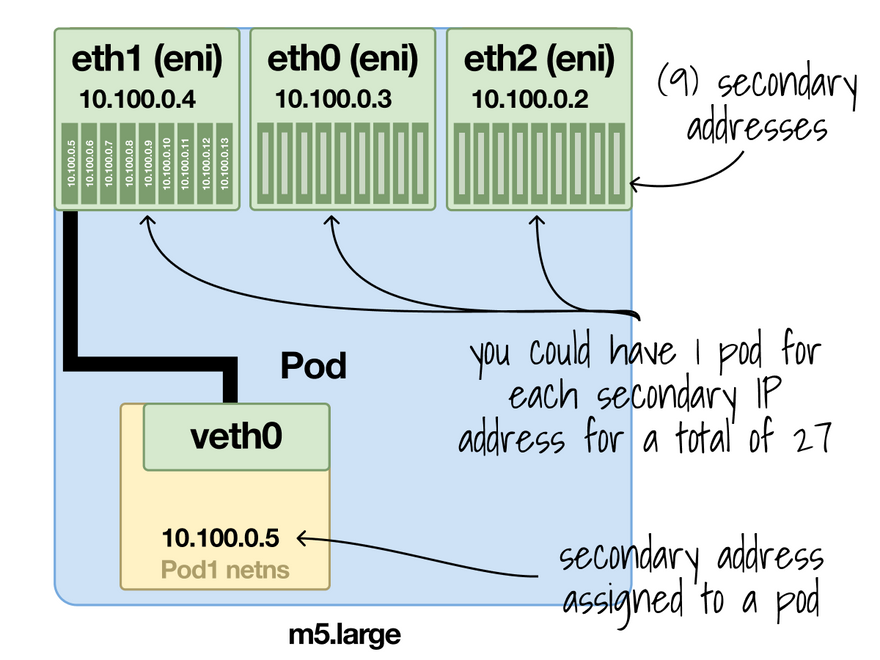

The AWS-CNI is an example of such a CNI.

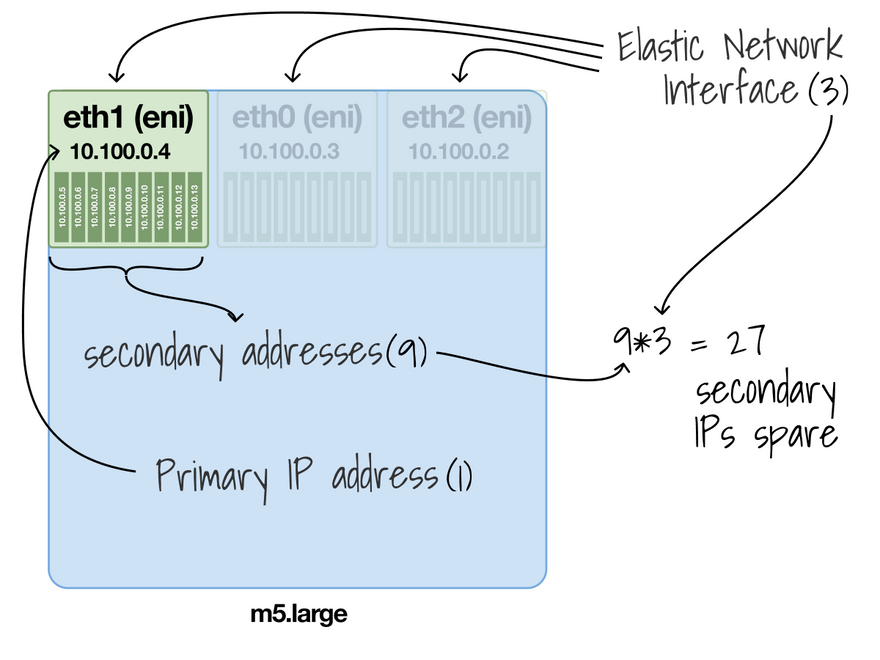

In AWS, each EC2 instance can have multiple network interfaces (ENIs).

You can assign a limited number of IPs to each ENI.

For example, an m5.large can have up to 10 IPs per ENI.

Of those 10 IPs, you have to assign one to the network interface.

The rest you can give away.

Previously, you could use the extra IPs and assign them to Pods.

But there was a big limit: the number of IP addresses.

Let's have a look at an example.

With an m5.large, you have up to 3 ENIs with 10 private IP addresses each.

Since one IP is reserved, you're left with 9 per ENI (or 27 in total).

That means that your m5.large could run up to 27 Pods.

Not a lot.
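The arithmetic above can be sketched in a few lines of Python. This is only a minimal illustration using the figures quoted in this article; the function name `max_pods_secondary_ips` is made up for the example and is not part of any AWS tool.

```python
def max_pods_secondary_ips(enis: int, ips_per_eni: int) -> int:
    """Pods that fit when every pod takes one secondary IP address."""
    # One address on each ENI is reserved for the interface itself.
    usable_per_eni = ips_per_eni - 1
    return enis * usable_per_eni


# m5.large figures from the text: 3 ENIs with 10 private IPs each.
print(max_pods_secondary_ips(enis=3, ips_per_eni=10))  # 27
```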

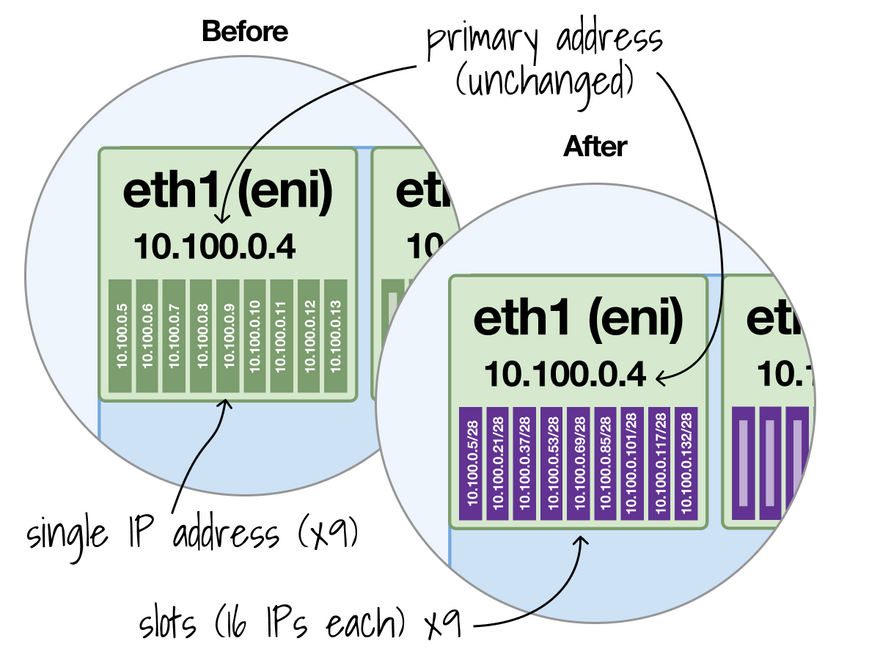

But AWS released a change to EC2 that allows "prefixes" to be assigned to network interfaces.

Prefixes what?!

In simple words, ENIs now support a range instead of a single IP address.

If before you could have 10 private IP addresses, now you can have 10 slots of IP addresses.

And how big is the slot?

By default, 16 IP addresses.

With 10 slots, you could have up to 160 IP addresses.

That's a rather significant change!

Let's have a look at an example.

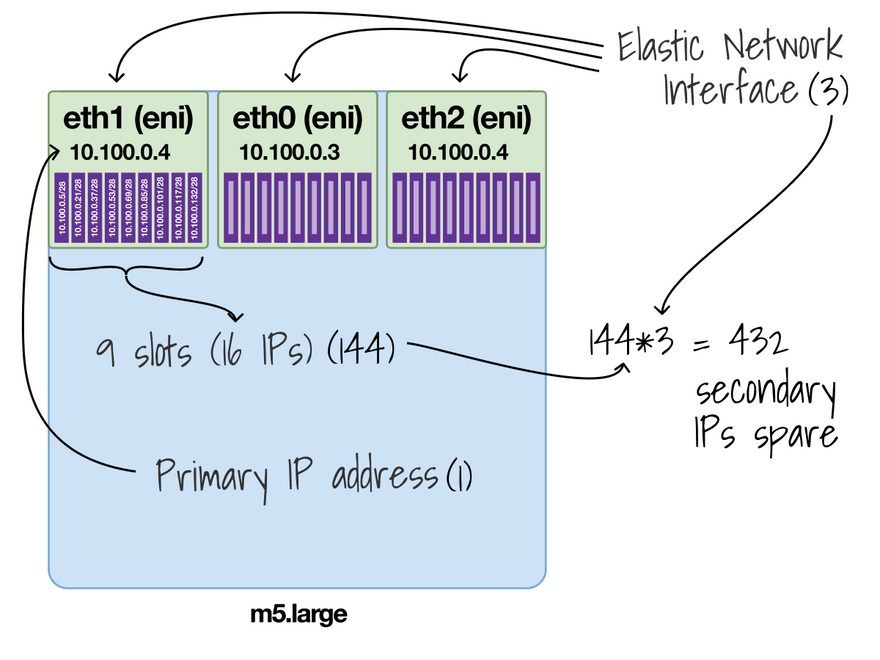

With an m5.large, you have 3 ENIs with 10 slots (or IPs) each.

Since one IP is reserved for the ENI, you're left with 9 slots.

Each slot is 16 IPs, so 9 x 16 = 144 IPs.

Since there are 3 ENIs, 144 x 3 = 432 IPs.

You can have up to 432 Pods now (vs 27 before).

The AWS-CNI supports slots but caps the maximum number of Pods at 110 or 250, so you won't be able to run 432 Pods on an m5.large.
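Here is the same calculation as a small Python sketch. The function names are hypothetical, and the cap is passed in as a parameter: 110 is used below simply as one of the two caps mentioned above, so swap in whichever applies to your instance type.

```python
PREFIX_SIZE = 16  # IP addresses in one slot (a /28 prefix)


def max_ips_with_prefixes(enis: int, slots_per_eni: int,
                          prefix_size: int = PREFIX_SIZE) -> int:
    """IP addresses available when each free slot holds a whole prefix."""
    # One slot per ENI is taken by the interface's own IP address.
    usable_slots = slots_per_eni - 1
    return enis * usable_slots * prefix_size


def effective_max_pods(ip_capacity: int, pod_cap: int) -> int:
    """The pod limit is the smaller of the IP capacity and the cap."""
    return min(ip_capacity, pod_cap)


capacity = max_ips_with_prefixes(enis=3, slots_per_eni=10)
print(capacity)                                   # 432
print(effective_max_pods(capacity, pod_cap=110))  # 110
```

Even with the cap, the node goes from 27 to 110 Pods.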

It's also worth pointing out that this is not enabled by default — not even in newer clusters.

Perhaps because only Nitro instances support it.

Assigning slots is great until you realize that the CNI gives 16 IP addresses at once instead of only 1, which has the following implications:

- Quicker IP space exhaustion.

- Fragmentation.

Let's review those.

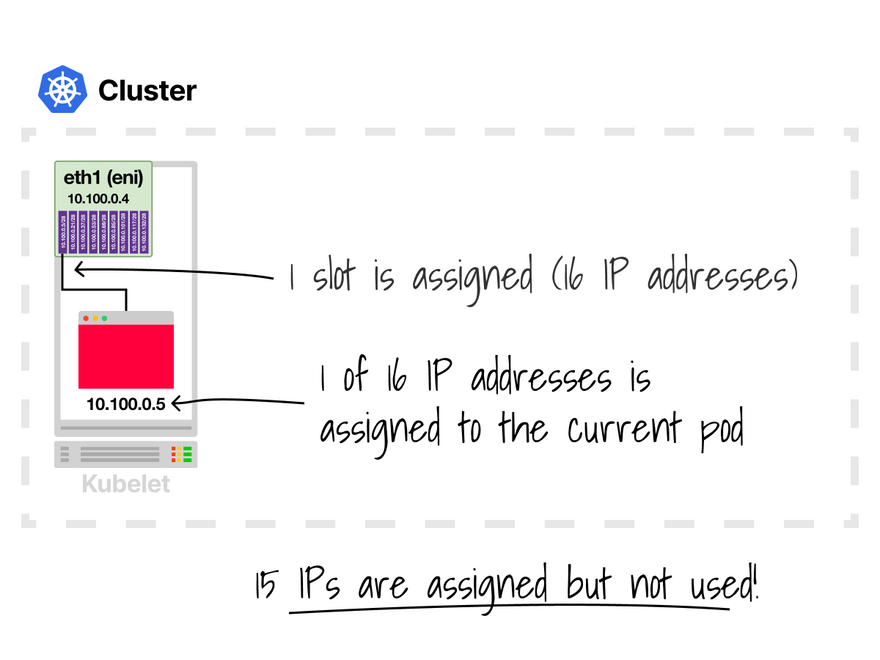

A pod is scheduled to a node.

The AWS-CNI allocates 1 slot (16 IPs), and the pod uses one.

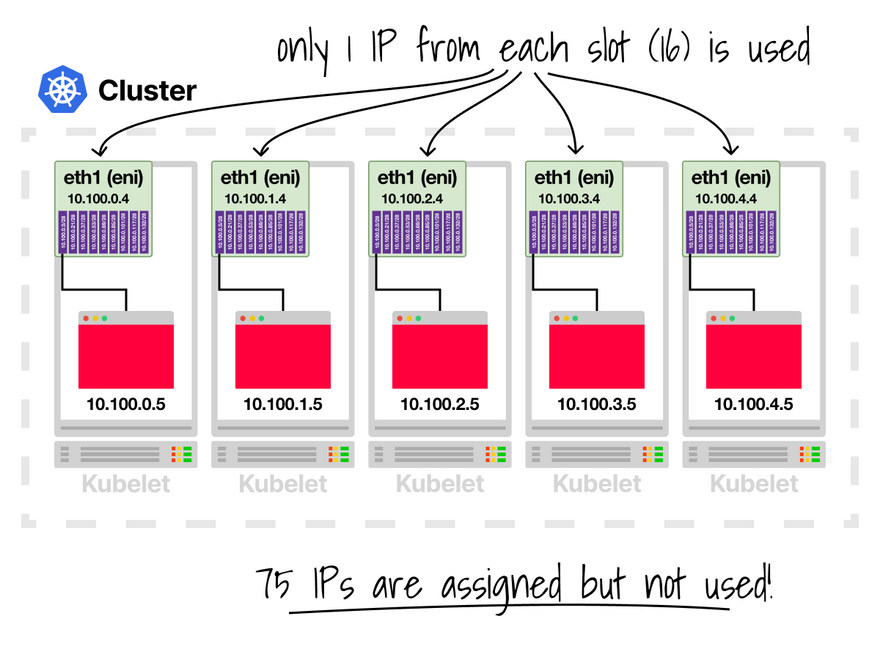

Now imagine having 5 nodes and a deployment with 5 replicas.

What happens?

The Kubernetes scheduler prefers to spread the pods across the cluster.

Likely, each node receives 1 pod, and the AWS-CNI allocates 1 slot (16 IPs).

You allocated 5*16=80 IPs from your network, but only 5 are used; the other 75 sit idle.
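To see how quickly this adds up, here is a rough sketch of the scenario. It assumes each node takes just enough whole slots to cover its pods and ignores any extra slots the CNI might keep warm; `prefix_usage` is a name invented for this example.

```python
from typing import Sequence, Tuple

PREFIX_SIZE = 16  # IP addresses in one slot


def prefix_usage(pods_per_node: Sequence[int],
                 prefix_size: int = PREFIX_SIZE) -> Tuple[int, int]:
    """Return (allocated IPs, used IPs) across all nodes."""
    allocated = 0
    for pods in pods_per_node:
        # Ceiling division: a node needs whole slots, never a fraction.
        slots = -(-pods // prefix_size)
        allocated += slots * prefix_size
    used = sum(pods_per_node)
    return allocated, used


# 5 nodes, 1 pod each, as in the deployment with 5 replicas.
allocated, used = prefix_usage([1, 1, 1, 1, 1])
print(allocated, used, allocated - used)  # 80 5 75
```

Packing the same 5 pods on a single node would take a single slot of 16 IPs instead.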

But there's more.

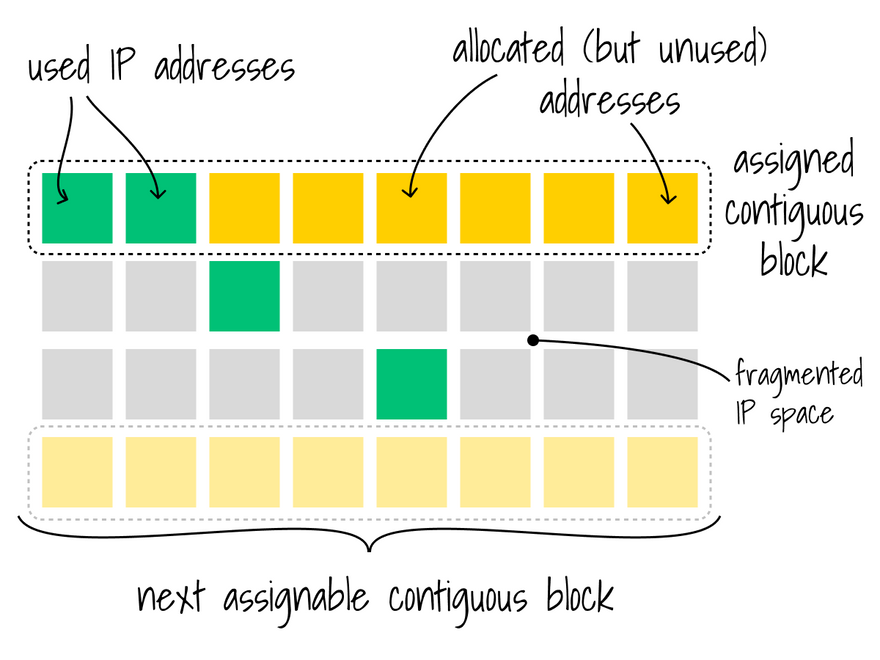

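Each slot is a contiguous block of IP addresses.

If individual IPs are already scattered across the subnet (e.g. because other nodes or resources were created first), there might not be a free contiguous block of 16 left, and the slot can't be allocated.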
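You can get a feel for fragmentation with Python's `ipaddress` module. The sketch below uses a made-up `/26` subnet and made-up addresses, and it ignores the addresses AWS reserves in every subnet; `free_prefixes` is a hypothetical helper, not an AWS API.

```python
import ipaddress
from typing import Iterable, List


def free_prefixes(subnet_cidr: str, used_ips: Iterable[str],
                  prefix_len: int = 28) -> List[ipaddress.IPv4Network]:
    """List the aligned /28 blocks in the subnet with no address in use."""
    subnet = ipaddress.ip_network(subnet_cidr)
    used = {ipaddress.ip_address(ip) for ip in used_ips}
    free = []
    for block in subnet.subnets(new_prefix=prefix_len):
        # A single used address makes the whole block unusable as a slot.
        if not any(ip in block for ip in used):
            free.append(block)
    return free


# A /26 has 64 addresses, which is four possible slots of 16.
scattered = ["10.0.0.5", "10.0.0.20", "10.0.0.40", "10.0.0.55"]
packed = ["10.0.0.1", "10.0.0.2", "10.0.0.3", "10.0.0.4"]

print(len(free_prefixes("10.0.0.0/26", scattered)))  # 0
print(len(free_prefixes("10.0.0.0/26", packed)))     # 3
```

In both cases only 4 addresses are in use, yet the scattered layout leaves no room for a single slot.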

How can you solve those?

- You can assign a secondary CIDR to the VPC used by EKS.

- You can reserve IP space within a subnet for exclusive use by slots.

Relevant links:

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/using-eni.html#AvailableIpPerENI

- https://aws.amazon.com/blogs/containers/amazon-vpc-cni-increases-pods-per-node-limits/

- https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ec2-prefix-eni.html#ec2-prefix-basics

And finally, if you've enjoyed this thread, you might also like:

- The Kubernetes workshops that we run at Learnk8s https://learnk8s.io/training

- This collection of past threads https://twitter.com/danielepolencic/status/1298543151901155330

- The Kubernetes newsletter I publish every week https://learnk8s.io/learn-kubernetes-weekly
