Containers are a popular topic in the industry because they help developers build and deploy apps a lot faster. Virtualization lets you run several operating systems on the same hardware, whereas containerization lets you run multiple instances or deploy multiple applications on the same operating system, on a single virtual machine or server.

This capacity to run multiple applications on the same resource makes the development life cycle more efficient. In this post, we summarize everything you need to know about these unfamiliar terms and how exactly containerization makes our lives easier.

Let’s dive in!

What is Containerization?

Containerization is simply packaging the required environment, libraries, frameworks, directories, and application code into a container. Citrix defines it as:

Containerization is defined as a form of operating system virtualization, through which applications are run in isolated user spaces called containers, all using the same shared operating system (OS). A container is essentially a fully packaged and portable computing environment that already has all the necessary packages to run the application inside.

Simply put, the process of creating containers is called containerization.

What is a Container?

The word container comes from contain, and that is exactly what it does: it contains everything you need to run an application.

A container packages code and all its dependencies, so the application runs quickly, portably, and reliably from one computing environment to another.

How does a Container work?

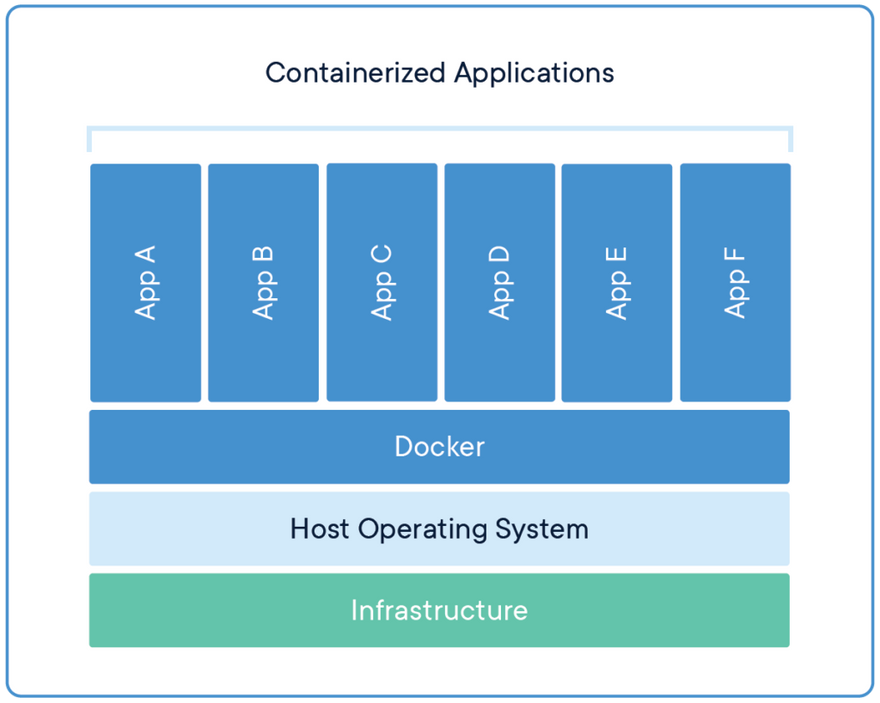

Containers run on top of a containerization engine over a single host operating system and share that operating system’s kernel with other containers. This sharing is achieved by limiting the shared parts of the OS to read-only mode. It also makes containers extremely light on resources, since you don’t need to set up a new operating system for each new container.

Docker, a containerization engine, running multiple containers. Image Credits: Docker
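One rough way to observe the kernel sharing described above is to print the kernel release on a Linux host and again inside a container running on it. Because a container has no kernel of its own, both runs should report the same release. This is only a minimal sketch: the helper name `kernel_info` is made up for illustration, and on Docker Desktop for macOS or Windows the containers run inside a Linux VM, so you would see that VM's kernel rather than the desktop OS.

```python
import platform


def kernel_info() -> dict:
    """Return the kernel name and release as seen by the current process."""
    uname = platform.uname()
    return {"system": uname.system, "release": uname.release}


info = kernel_info()
# Run this on the host and inside a container, then compare the two lines.
print(f"{info['system']} kernel {info['release']}")
```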

As you can see, containers differ a lot from virtual machines (where you’d need a separate OS for each machine). If you want to know more, we have a blog post that discusses the core differences in detail.

In the same post, we also discuss why VMs are more secure than containers, along with other drawbacks. 🤯

Why do you need Containers?

Development is a complicated task, and as a developer you have many issues to tackle and many things to keep in mind. Containers help you keep your focus on the code and worry less about other concerns, such as the environment, during development.

Here are a few reasons to use containers to make your dev life easier:

Consistent Environment

Development is a critical task, and you must take many factors into account when deploying an application, from libraries and frameworks to network configuration and directory management. Think about how differently Linux and Windows manage their directories.

Problems arise when the supporting software environment is not identical, says Docker creator Solomon Hykes. “You’re going to test using Python 2.7, and then it’s going to run on Python 3 in production and something weird will happen. Or you’ll rely on the behavior of a certain version of an SSL library and another one will be installed. You’ll run your tests on Debian and production is on Red Hat and all sorts of weird things happen.”

Containers give developers the opportunity to build predictable environments that are isolated from other applications. The application’s software dependencies, such as particular versions of programming language runtimes and other software libraries, can also be packaged inside the container.

All of this works in the developer’s favor. The focus stays on quality code and not on the bugs that might creep in while migrating code from the developer’s machine to the server.
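The version mismatch described in the quote above can be caught early with a small startup check. The sketch below is only an illustration, not part of any container tool: `runtime_matches` is a hypothetical helper that compares the interpreter's major and minor version with the version the application was tested on. Inside a container the runtime is fixed by the image, so a check like this should always pass.

```python
import sys
from typing import Optional, Sequence


def runtime_matches(expected: Sequence[int],
                    actual: Optional[Sequence[int]] = None) -> bool:
    """Return True when the (major, minor) runtime version equals `expected`.

    `actual` defaults to the interpreter running this code.
    """
    if actual is None:
        actual = sys.version_info
    return tuple(actual[:2]) == tuple(expected[:2])


# Tested on 2.7 but deployed on 3.x: the mismatch is reported up front
# instead of surfacing later as "something weird".
print(runtime_matches((2, 7), (3, 11)))  # False
```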

Less overhead

A container can be just tens of megabytes in size, while a virtual machine with its entire operating system may be several gigabytes. Because of this, a single server can hold far more containers than virtual machines, and each individual container consumes fewer resources.
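To make the size difference concrete, here is a back-of-the-envelope calculation. All three numbers are assumptions chosen only to match the orders of magnitude above (a 64 GB server, a 50 MB container, a 4 GB virtual machine), and the calculation counts size alone, ignoring CPU, memory, and other limits.

```python
def instances_that_fit(capacity_mb: int, footprint_mb: int) -> int:
    """Return how many instances of a given size fit into the capacity."""
    if footprint_mb <= 0:
        raise ValueError("footprint_mb must be positive")
    return capacity_mb // footprint_mb


SERVER_MB = 64 * 1024   # assumed server capacity: 64 GB
CONTAINER_MB = 50       # assumed container size: tens of megabytes
VM_MB = 4 * 1024        # assumed VM size: several gigabytes

print(instances_that_fit(SERVER_MB, CONTAINER_MB))  # 1310
print(instances_that_fit(SERVER_MB, VM_MB))         # 16
```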

Just In Time

It can take several minutes for virtual machines to boot up their operating systems and begin running the applications they host. Containerized applications, by contrast, can start almost instantly. That means containers can be instantiated in a “just in time” mode when they are needed and can disappear when they are no longer required, freeing up resources on their hosts.
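With Docker, this throwaway pattern is commonly expressed with the `--rm` flag of `docker run`, which removes the container as soon as its process exits. The sketch below only builds and prints the command. The helper name `ephemeral_run_command` is hypothetical, and the `alpine` image and `echo` command are example values, not something this post prescribes.

```python
import shlex


def ephemeral_run_command(image: str, *args: str) -> list:
    """Build a `docker run --rm` command line for a short-lived container."""
    # --rm tells Docker to remove the container once its process exits.
    return ["docker", "run", "--rm", image, *args]


cmd = ephemeral_run_command("alpine", "echo", "hello")
print(shlex.join(cmd))  # docker run --rm alpine echo hello
```

On a machine with Docker installed, the resulting list could be passed to `subprocess.run(cmd, check=True)` to actually start the container.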

Modularity

Instead of running an entire complex application within a single container, you can split it into modules (such as the database, the application front end, and so on), each running in its own container.

This is the so-called microservices approach. Applications designed this way are simpler to manage because each module is relatively simple, and changes can be made to one module without rebuilding the whole application. Since containers are so lightweight, individual modules (or microservices) can be instantiated only when required and are available almost immediately.

Run Anywhere

Containers can run almost anywhere, making development and deployment much easier: on Linux, Windows, and Mac operating systems; on virtual or bare metal machines; on the developer’s machine or in on-site data centers; and, of course, in the public cloud.

Widespread, well-supported container image formats such as Docker’s further enhance portability.

You can make use of containers anywhere you want to run your apps.

What is a Container Image?

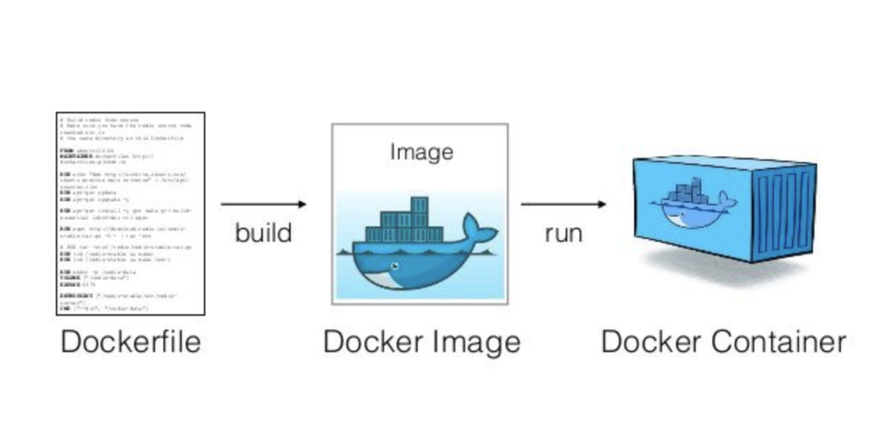

To recreate a container, you need a file or template that contains instructions for what the replica must include.

Container images are exactly such templates. An image consists of unchangeable static files that can be shared, and building from the same shared image yields an equivalent container every time.

A Docker image is one example of a container image used to build containers.

An image comes in handy when you need to recreate a consistent working environment in another container without going through long, tedious manual configuration.
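The idea of an unchangeable template that yields the same container every time can be illustrated with a toy model. This is only a sketch and not how a real engine stores images: `build_image` and `start_container` are made-up names, each layer is a plain dictionary mapping file paths to contents, and the container is modelled as a writable layer stacked on top of the read-only image layers.

```python
from collections import ChainMap
from types import MappingProxyType


def build_image(*layers: dict) -> tuple:
    """Freeze each layer so the image cannot be modified after it is built."""
    return tuple(MappingProxyType(dict(layer)) for layer in layers)


def start_container(image: tuple) -> ChainMap:
    """Stack a fresh writable layer on top of the image's read-only layers."""
    # ChainMap reads from left to right and writes only to the first mapping,
    # so later image layers override earlier ones and the image stays intact.
    return ChainMap({}, *reversed(image))


image = build_image(
    {"/bin/python": "python 3.11"},    # base layer (example content)
    {"/app/main.py": "print('hi')"},   # application layer (example content)
)

first = start_container(image)
second = start_container(image)
first["/app/main.py"] = "print('patched')"  # lands in first's writable layer

print(second["/app/main.py"])  # print('hi')
```

Changing a file in one container leaves both the image and every other container started from it untouched, which is what makes the environment reproducible.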

What are the popular Container Engines?

Docker

Docker’s open-source containerization engine was the first to bring containers into the mainstream and is still the most popular container technology among its competitors. Docker works with most commercial/enterprise products, as well as many open-source tools.

You can read more about Docker and Docker images in this curated beginner-friendly post.

CRI-O

CRI-O, a lightweight alternative to Docker, lets you run containers directly from Kubernetes, a container management system, without any unnecessary code or configuration.

Kata Containers

Kata Containers is an open-source container runtime built on lightweight virtual machines. They feel and function like containers but use hardware virtualization technology as a second layer of protection to provide more robust workload isolation.

Microsoft Containers

Positioned as an alternative to Linux, Microsoft Containers support Windows OS in very specific conditions. Typically, they run on a real virtual machine rather than under a cluster manager like Kubernetes.

Final Thoughts ⭐

Containers are becoming essential as development moves towards cloud-native. The advantages of using containers, such as flexibility and agility, are unmatched, and adoption is on the rise.

I hope this blog helped you understand containerization and containers in depth. If you want to experiment with containers, it is worth learning more about the best practices that companies of all sizes follow when adopting them.

Happy Containerizing!
