The main objective of this blog is to learn reinforcement learning by doing. We are going to make Mario play the game by himself using reinforcement learning.

📦Packages and tools used

- Mario Environment

- OpenAI Gym interface

- OpenAI Gym toolkit

- PyTorch

- PPO Algorithm

- Policy Optimization

⚙️Set up a Mario environment

pip install gym_super_mario_bros nes_py

We are installing gym_super_mario_bros and nes_py. The gym_super_mario_bros package sets up our gaming environment, where our Mario will save Princess Peach 👸 from Bowser. Do you remember the controls in this game?

- Left dpad – Move left, enter pipe to the left of Mario.

- Right dpad – Move right, enter pipe to the right of Mario.

- Up dpad – Enter pipe above Mario or enter door behind Mario.

- Down dpad – Crouch, Ground Pound (In the air), enter pipe below Mario.

- A button – Jump, confirm menu selection.

- B button – Jump, exit menu.

- X button/Y button – Dash (Hold), launch fireball (Fire Mario only).

Ahh, good old memories. Now back to our topic: nes-py gives us a virtual joypad in Python, so our model can control Mario and make him perform actions.

⛓️Importing the required dependencies

import gym_super_mario_bros

from nes_py.wrappers import JoypadSpace

from gym_super_mario_bros.actions import SIMPLE_MOVEMENT

Let's break down what these three imports do:

- import gym_super_mario_bros - imports the game itself.

- from nes_py.wrappers import JoypadSpace - imports the wrapper that restricts the environment to a chosen list of button combinations.

- from gym_super_mario_bros.actions import SIMPLE_MOVEMENT - By default, gym_super_mario_bros environments use the full NES action space of 256 discrete actions. To constrain this, gym_super_mario_bros.actions provides three action lists (RIGHT_ONLY, SIMPLE_MOVEMENT, and COMPLEX_MOVEMENT). We are using SIMPLE_MOVEMENT, which has only 7 actions and so reduces the data we are going to process. Actions are combinations of the controls in the game.
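To see where the number 256 comes from: the NES controller has eight buttons, each either pressed or released, which gives 2^8 = 256 combinations. The sketch below only illustrates that idea and is not the actual nes_py code. The bit assigned to each button in `BUTTON_BITS` is an assumption made for the example, and `encode` is a hypothetical helper.

```python
# Illustrative bit layout for the eight NES buttons (assumed for this sketch).
BUTTON_BITS = {
    "NOOP": 0b00000000,
    "A": 0b00000001,
    "B": 0b00000010,
    "select": 0b00000100,
    "start": 0b00001000,
    "up": 0b00010000,
    "down": 0b00100000,
    "left": 0b01000000,
    "right": 0b10000000,
}

# The same seven button combinations that SIMPLE_MOVEMENT lists.
SIMPLE_MOVEMENT = [
    ["NOOP"],
    ["right"],
    ["right", "A"],
    ["right", "B"],
    ["right", "A", "B"],
    ["A"],
    ["left"],
]


def encode(buttons):
    """Combine a list of button names into one byte (hypothetical helper)."""
    byte = 0
    for name in buttons:
        byte |= BUTTON_BITS[name]
    return byte


full_action_count = 2 ** 8  # every pressed/released combination of 8 buttons
action_table = [encode(combo) for combo in SIMPLE_MOVEMENT]

print(full_action_count)               # 256
print(len(action_table))               # 7
print(format(action_table[2], "08b"))  # bits set for right + A
```

With a table like this, the model only has to pick an index from 0 to 6 instead of one of 256 raw button bytes.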

(Bonus: Simplify the environment as much as possible. The more complex it is, the harder it is for our AI to learn how to play the game. We will also convert the RGB image into a grayscale image, which again reduces the amount of data we need to process.)

🎮Setting up the game

env = gym_super_mario_bros.make('SuperMarioBros-v0')

env = JoypadSpace(env, SIMPLE_MOVEMENT)

gym_super_mario_bros offers various environments in which we can train our model to learn and play. Feel free to check out the different environments. I am going to use SuperMarioBros-v0, the default classic environment.

SuperMarioBros-v0

SuperMarioBros-v2

SuperMarioBros-v3

Next, we wrap the environment with nes_py.wrappers.JoypadSpace, passing in the environment and the action list.

🏃Run the game

done = True
for step in range(5000):
    if done:
        state = env.reset()
    state, reward, done, info = env.step(env.action_space.sample())
    env.render()

env.close()

The first thing we do is set done = True. This flag tells us whether the game needs to restart.

Next, for step in range(5000): loops through every single frame. Every time the screen gets updated, we tell the game to take a specific action.

env.reset() starts the game.

env.step() passes an action to the game, like telling it to press a button to move right, left, etc.

env.action_space.sample() gives a random action.

state, reward, done, info are the values returned by env.step(), which give us some data to process.

env.render() shows the game on the screen.

env.close() closes the game window and terminates the game.
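If you want to see the reset/step/done pattern on its own, without the emulator, here is a minimal sketch. `ToyEnv` is a hypothetical stand-in written for this example (it is not part of gym or nes_py), and its reward rule is made up. Only the shape of the loop matches the Mario code.

```python
import random


class ToyEnv:
    """Hypothetical stand-in for the Mario environment (not from any library)."""

    def __init__(self, episode_length=10, n_actions=7):
        self.episode_length = episode_length
        self.n_actions = n_actions
        self.t = 0

    def reset(self):
        self.t = 0
        return self.t  # the "state" here is just a step counter

    def sample_action(self):
        return random.randrange(self.n_actions)

    def step(self, action):
        self.t += 1
        reward = 1.0 if action == 1 else 0.0  # pretend action 1 means "move right"
        done = self.t >= self.episode_length  # the episode ends after a fixed length
        return self.t, reward, done, {"t": self.t}


env = ToyEnv()
done = True
episodes = 0
total_reward = 0.0

for step in range(50):
    if done:  # start (or restart) an episode
        state = env.reset()
        episodes += 1
    state, reward, done, info = env.step(env.sample_action())
    total_reward += reward

print(episodes)      # 5 episodes of 10 steps each
print(total_reward)  # varies from run to run because the actions are random
```

Starting with done = True means the very first pass through the loop calls reset(), so the same line handles both the first start and every later restart.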

🥁result:

OSError: exception: access violation reading 0x000000000003C200

If you get one of these access violation errors, just restart your kernel and run the imports again. This will do the job.

🔄Preprocess the game for applied AI

📦Installing packages for preprocessing

We need to preprocess the Mario game data before we train our AI model on it. The two key preprocessing steps are grayscaling and frame stacking.

!pip3 install torch torchvision torchaudio

Install PyTorch

You can customize the installation according to your hardware. If you have a CUDA-enabled GPU, follow the steps given on the PyTorch website.

If you don't have one, no worries, just run the above command.

!pip install stable-baselines3[extra]

Next, we install Stable Baselines3, an open-source project that implements reinforcement learning algorithms in PyTorch. It provides various RL algorithms to work with. For this Mario game, we use the Proximal Policy Optimization (PPO) algorithm to train our AI.

📦Importing packages for preprocessing

from gym.wrappers import GrayScaleObservation

from stable_baselines3.common.vec_env import VecFrameStack, DummyVecEnv

from matplotlib import pyplot as plt

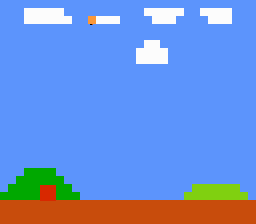

Our AI learns from images of the Mario game.

Color (RGB) images have three values per pixel, so there is three times as much data to process. We therefore convert them into grayscale images.

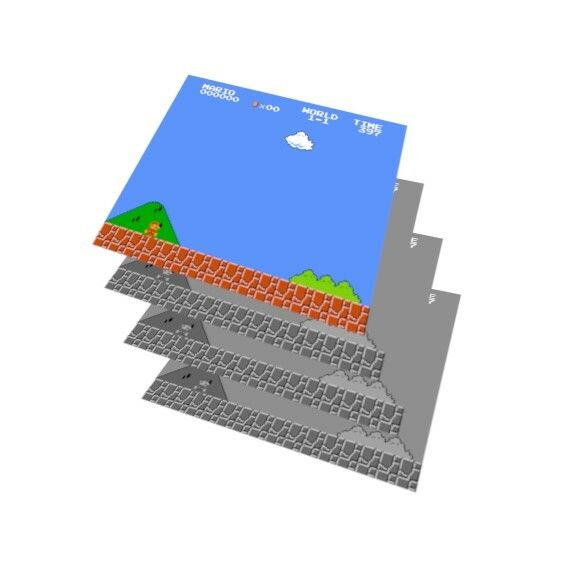

Frame stacking gives our AI an idea of where Mario and the enemies are moving by stacking consecutive frames together.

🎁Wrapping the environment

env = gym_super_mario_bros.make('SuperMarioBros-v0')

env = JoypadSpace(env, SIMPLE_MOVEMENT)

env = GrayScaleObservation(env, keep_dim=True)

env = DummyVecEnv([lambda: env])

env = VecFrameStack(env, 4,channels_order='last')

GrayScaleObservation(env, keep_dim=True) - This command converts our environment's observations from RGB to grayscale. keep_dim=True keeps the final channel dimension, which helps with frame stacking.

This process reduces the amount of data we need to process. Let's take a quick look at how much data a color image contains compared to a grayscale one.

RGB image (240*256*3) = 184320 values to process

Grayscale image (240*256*1) = 61440 values to process
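The arithmetic above can be checked in a few lines. This is only a worked calculation using the frame size from this post. The variable names are made up for the example.

```python
HEIGHT, WIDTH = 240, 256  # frame size used in this post

rgb_values = HEIGHT * WIDTH * 3   # three channels: red, green, blue
gray_values = HEIGHT * WIDTH * 1  # a single brightness channel

print(rgb_values)                 # 184320
print(gray_values)                # 61440
print(rgb_values // gray_values)  # 3

# Four stacked grayscale frames, as used later with VecFrameStack.
stacked_values = gray_values * 4
print(stacked_values)             # 245760
```

Note that four stacked grayscale frames hold a bit more data than a single RGB frame, but in exchange the model gets to see motion across frames.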

env = DummyVecEnv([lambda: env]) - We wrap the environment in a dummy vectorized environment, which is the form Stable Baselines3 works with. Each time we apply one of these wrappers, the shape of the data changes.

env = VecFrameStack(env, 4,channels_order='last') - We pass in our preprocessed environment and the number of frames we want to stack. In our case it is 4, but you can use more if you need to. Finally, channels_order='last' says that the stacked frames go into the last dimension.

The number of stacked frames therefore shows up as the last dimension of the shape.

code:

state = env.reset()

state.shape

output:

(1, 240, 256, 1) = one channel (after DummyVecEnv, before frame stacking)

(1, 240, 256, 4) = four channels (after VecFrameStack)
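To get a feel for what stacking does over time, here is a simplified sketch that uses short strings in place of image frames. `FrameStack` is a hypothetical helper written for this post, not the Stable Baselines3 implementation, and using None for the slots that have no frame yet is an assumption of the sketch.

```python
from collections import deque

N_STACK = 4


class FrameStack:
    """Hypothetical helper that keeps the most recent n_stack frames."""

    def __init__(self, n_stack):
        self.n_stack = n_stack
        self.frames = deque(maxlen=n_stack)

    def reset(self, first_frame):
        self.frames.clear()
        for _ in range(self.n_stack - 1):
            self.frames.append(None)  # placeholder: no frame seen yet
        self.frames.append(first_frame)
        return list(self.frames)

    def step(self, new_frame):
        self.frames.append(new_frame)  # the deque drops the oldest frame
        return list(self.frames)


stack = FrameStack(N_STACK)
first_obs = stack.reset("f0")
print(first_obs)  # [None, None, None, 'f0']

for name in ["f1", "f2", "f3", "f4"]:
    obs = stack.step(name)

print(obs)        # ['f1', 'f2', 'f3', 'f4']
```

The newest frame always sits in the last slot and the oldest one falls off the front, so every observation covers a short window of recent history.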

Running the game

state = env.reset()

This gives us our current state in the game (basically a snapshot of the game at that moment).

state, reward, done, info = env.step([5])

env.step() passes an action to the game, like telling it to press a button to move right, left, etc. It works as explained in the Mario setup phase above, except that the vectorized environment expects a list of actions, one per environment, which is why we pass [5] rather than 5.

In the above code, we call env.step([5]), where 5 is an index into the SIMPLE_MOVEMENT array:

[['NOOP'],

['right'],

['right', 'A'],

['right', 'B'],

['right', 'A', 'B'],

['A'],

['left']]

The action at index 5 is ['A'], which makes Mario jump. We are just checking that everything works fine.

state, reward, done, info are the values returned by env.step(). The reward function of the environment is built around the objective of the game: move as far right as possible, as fast as possible, without dying.

- state - Our current state in the environment.

- reward - A positive score is given when our Mario performs well in the game, and a zero or negative one otherwise. Our main goal is to maximize the total reward.

- done - Tells us whether the episode is over, for example because Mario is dead or the game is over.

- info - Extra information about the environment, like {'coins': 0, 'flag_get': False, 'life': 2, 'score': 0, 'stage': 1, 'status': 'small', 'time': 400, 'world': 1, 'x_pos': 40, 'y_pos': 79}
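The objective above (go right, go fast, don't die) can be turned into a number in a simple way. The sketch below is my reading of how the gym_super_mario_bros documentation describes the reward, not the package's actual code: `sketch_reward` is a hypothetical function, and the death penalty of -15 and the clipping range are assumptions taken from that description.

```python
def sketch_reward(x_prev, x_now, clock_prev, clock_now, died):
    """Hypothetical reward: progress to the right, minus time lost, minus death."""
    velocity = x_now - x_prev       # positive when Mario moves right
    clock = clock_now - clock_prev  # zero or negative as the timer counts down
    death = -15 if died else 0      # assumed penalty for losing a life
    total = velocity + clock + death
    return max(-15, min(15, total))  # keep the reward within [-15, 15]


print(sketch_reward(40, 43, 400, 400, died=False))  # 3: moved right
print(sketch_reward(40, 40, 400, 399, died=False))  # -1: stood still while the clock ticked
print(sketch_reward(40, 42, 400, 400, died=True))   # -13: small progress, then died
```

The x_pos and time values in the info dictionary are the kind of quantities such a formula would be computed from.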

🖼️ Frame Stacking

plt.figure(figsize=(20,16))
for idx in range(state.shape[3]):
    plt.subplot(1,4,idx+1)
    plt.imshow(state[0][:,:,idx])
plt.show()

This process is just to visualize how the stacking process works.
